How to Evaluate an EdTech Solution's Quality?

April 22, 2021

As the majority of schools globally went into remote learning mode in 2020, the use of digital learning solutions grew rapidly. While schools were hurriedly adopting digital tools, we became aware of many occasions where the purchased tool didn’t meet the buyer’s expectations.

Over the years, EAF has conducted hundreds of quality evaluations of learning solutions, and the EAF model has also been used outside of Finland. For example, the Mercator Foundation in Switzerland and ARQA-VET in Austria, operating under the Ministry of Education, have run projects where local teachers use the EAF model and Evaluation Platform to evaluate the quality of EdTech.

Of course, high quality is fundamental for learning solution developers and buyers alike.

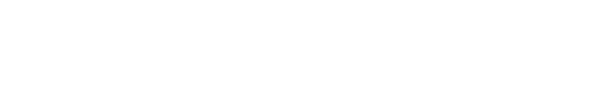

The purpose of this article is to describe in detail how the EAF EdTech evaluation process works. We trust that understanding the EAF model provides guidance and inspiration for setting up your own quality criteria for EdTech procurement and development.

Quick Facts About EAF Evaluation & Certification

- EAF currently has 114 teacher-evaluators in Finland.

- Well over 300 product evaluations have been made globally with the EAF evaluation model.

- In the evaluation, the product is mapped against:

1. Curriculum (can be any publicly available curriculum)

2. Learning science principles

3. Usability criteria

- If a solution aligns with the listed criteria, a conclusion can be made that the product provides a pedagogically sound learning experience with relevant learning objectives.

- The EAF evaluation criteria are developed together with researchers from the University of Helsinki.

Pedagogical Understanding is at the Core

The backbone of the EAF evaluation system is the team of teachers who have gained a high level of pedagogical understanding through their education and work experience in the classroom.

Every evaluation is led by EAF’s evaluation administrator, who also guides teacher-evaluators in their work. This is an iterative process where the administrator verifies the assessment and reasoning behind the evaluators’ scoring, gives them feedback and may ask for further clarifications.

EdTech Evaluation is Hard Work – No Magic Bullets Exist

When we mention that EAF has developed software for learning solution quality evaluations, people sometimes assume the evaluation is fully automated and done by a machine.

The reality is that machines can’t evaluate learning solutions properly. To be accurate and efficient, the evaluation needs to be done by human beings with high-level pedagogical knowledge. There are no shortcuts or magic bullets. Evaluating the quality of learning content is hard work, which requires commitment to auditing the material and assessing it with a science-based method.

Moreover, the quality of the evaluation work depends on the evaluator’s experience, so every evaluator builds up their own competency over time as the number of evaluations they have conducted increases.

The reason why we need a “human ingredient” is simply that when it comes to pedagogy, the solution’s unique educational purpose matters a lot. We can’t evaluate a solution’s quality unless we have first defined 1) what the ultimate goal for using the product or service is and 2) what learning context it's meant for. This can be done only by a person with pedagogical knowledge.

Since qualitative evaluation always includes some amount of subjectivity, each evaluation is conducted by a minimum of three teachers. Three evaluators ensure that all main problems are identified and bring a variety of viewpoints.
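One way to picture how several independent scores might be combined is the minimal sketch below. The 1-to-5 scale, the statement IDs and the disagreement threshold are illustrative assumptions, not EAF's actual scoring rules. The sketch only shows how a mean and a spread per statement could flag items for the administrator to discuss with the evaluators.

```python
from statistics import mean

# Hypothetical scores (assumed 1-5 scale) from three teacher-evaluators,
# keyed by made-up statement IDs. None of these values come from EAF.
scores = {
    "S1": [4, 5, 4],
    "S2": [2, 5, 3],
    "S3": [3, 3, 3],
}

MIN_EVALUATORS = 3  # the article states a minimum of three teachers
MAX_SPREAD = 2      # assumed threshold for "the evaluators disagree"


def summarize(scores_by_statement):
    """Return the mean score and a review flag for each statement."""
    summary = {}
    for statement_id, values in scores_by_statement.items():
        if len(values) < MIN_EVALUATORS:
            raise ValueError(
                f"{statement_id} has fewer than {MIN_EVALUATORS} scores"
            )
        spread = max(values) - min(values)
        summary[statement_id] = {
            "mean": round(mean(values), 2),
            "needs_review": spread > MAX_SPREAD,
        }
    return summary


result = summarize(scores)
for statement_id, info in result.items():
    print(statement_id, info)
```

In this made-up data only statement S2 is flagged, because its scores range from 2 to 5. A flag like this would be a prompt for discussion, not a verdict.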

Defining the Learning Objectives

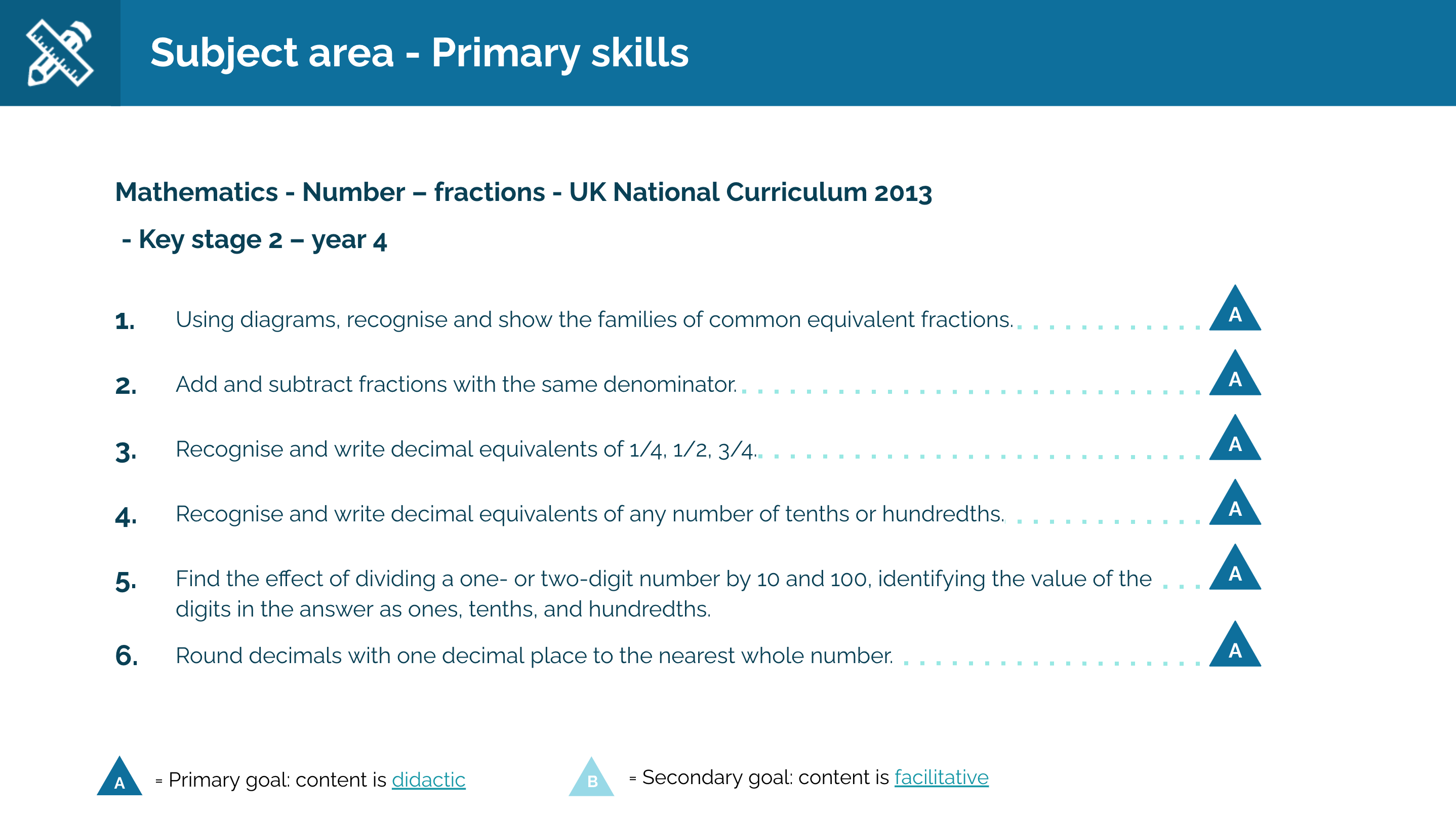

Each EAF evaluation starts with mapping the learning goals of the solution. This is most commonly done by using a reference curriculum and mapping the solution against that.

If the solution is targeted at professional development use, there isn't necessarily an obvious reference curriculum. In these cases, the EAF team gets to know the solution first and then finds or compiles a relevant curriculum, for example from the university level.

With learning tools that don’t provide any content, the learning goals definition most commonly includes pedagogical goals and goals for learning different skills. Pedagogical goals describe how the learning is supported, for example, “the solution allows formative assessment”.

When sharing with EdTech developers how we evaluate solutions, we always recommend that they think about learning goals first, before designing a mechanic for a learning activity: take a look at the target market curriculum and plan which learning objectives the solution should support. Next, move on to designing content and activities that align with the selected objectives. When a solution is created with this type of goal-oriented approach, teachers and education sector decision makers will find it more convincing.

The Context of Use Defines What Pedagogy is Seen as Justified

As mentioned above, the use of pedagogical criteria depends on the solution’s learning goals. As an example, when learning foreign language words, it’s well justified to lean on drilling and repetition-based activities. If the solution aims to increase understanding of how photosynthesis works, the same type of “quizzing until correct” is not an ideal approach. It can lead to remembering facts, but not necessarily to understanding the phenomenon. It would be pedagogically better justified to provide visual demonstrations, explanations and guidance so that the learner is able to explain the phenomenon.

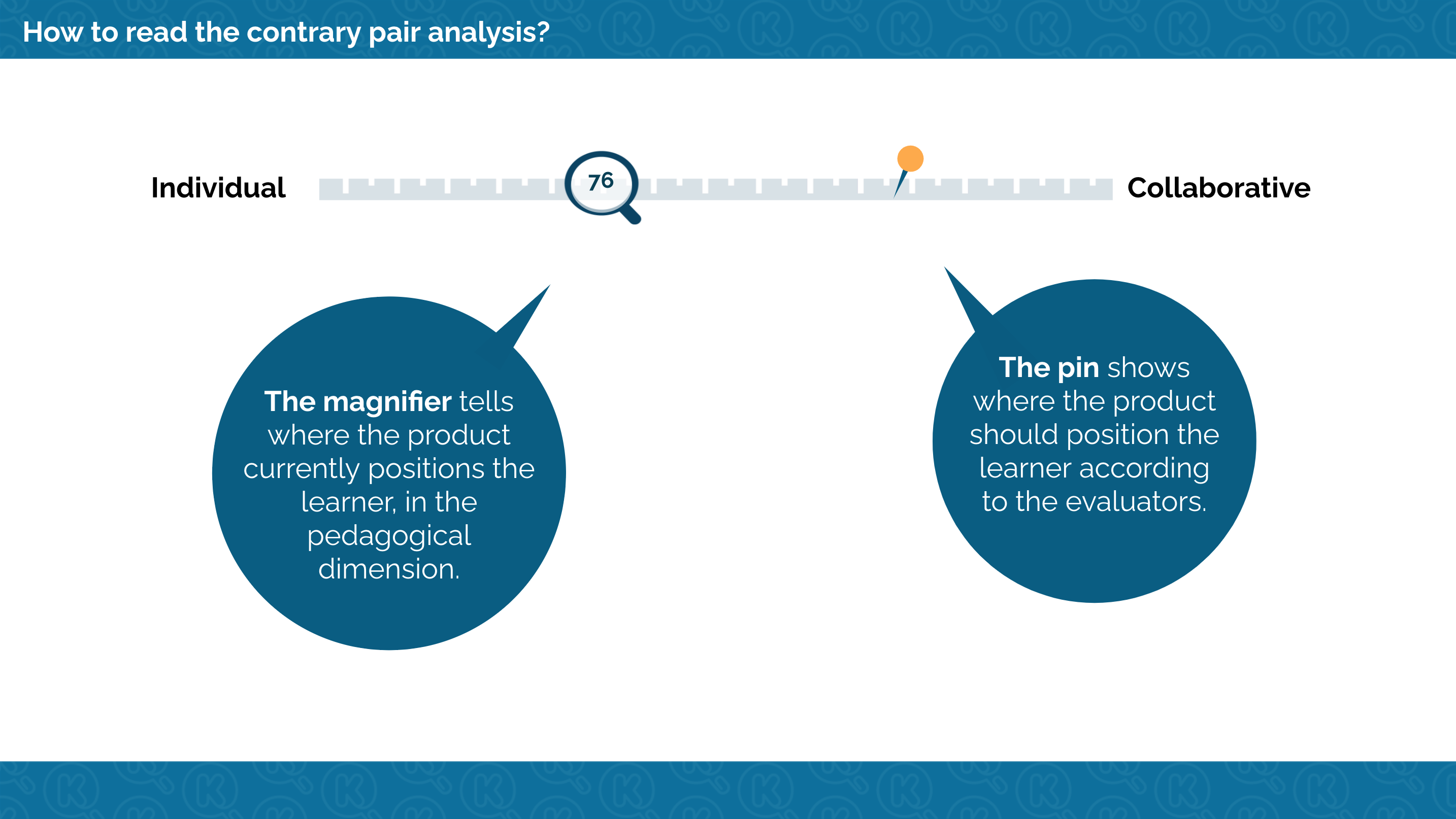

The EAF pedagogical criteria consist of four pedagogical dimensions:

- Passive OR Active learning

- Individual OR Collaborative learning

- Linear OR Non-linear learning

- Rehearse-based OR Construct-based learning

(Hietajärvi, L. V. O. & Maksniemi, E. I., 2017, Education Alliance Finland.)

Neither end of the scale is good or bad, positive or negative in all cases. Which approach should be favored always depends on the learning goals. Many good solutions include elements from both ends of the scale.

The direction for development suggested in the EAF report depends on the evaluators’ professional perspective. The suggestions are formed by reflecting the activities against the solution’s learning goals. Having four people altogether evaluate the solution independently ensures the validity of the findings and development suggestions.

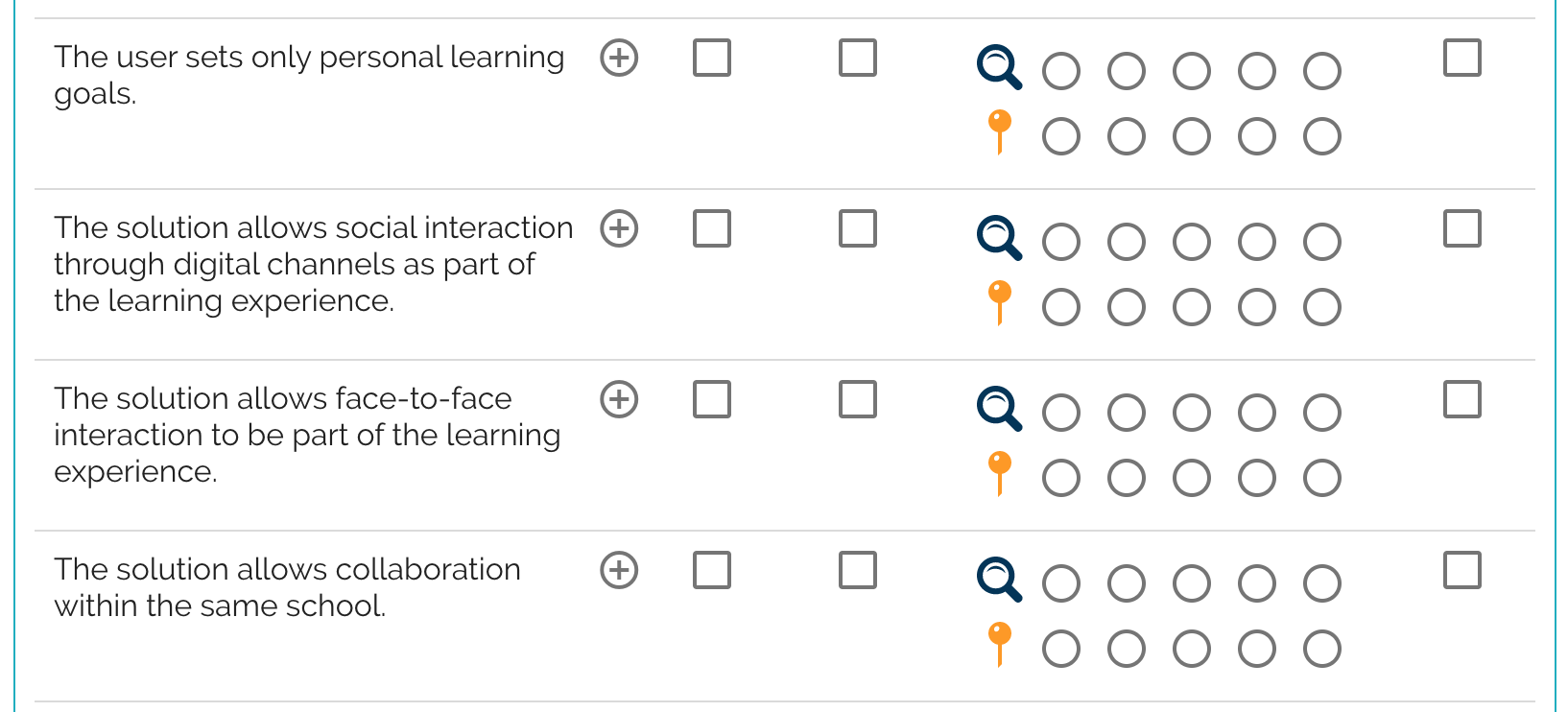

The pedagogy of the solution is not only defined by direct learning activities or content delivery. Other aspects also matter, for example:

- Does the solution allow learners to reflect on their own progress (analytics, reward system, feedback)?

- What media (text, video, audio) are utilized?

- Does the solution allow social interaction?

- Is the content adaptive?

- What data does the educator have access to?

- How is the educator allowed/guided to communicate with the student?

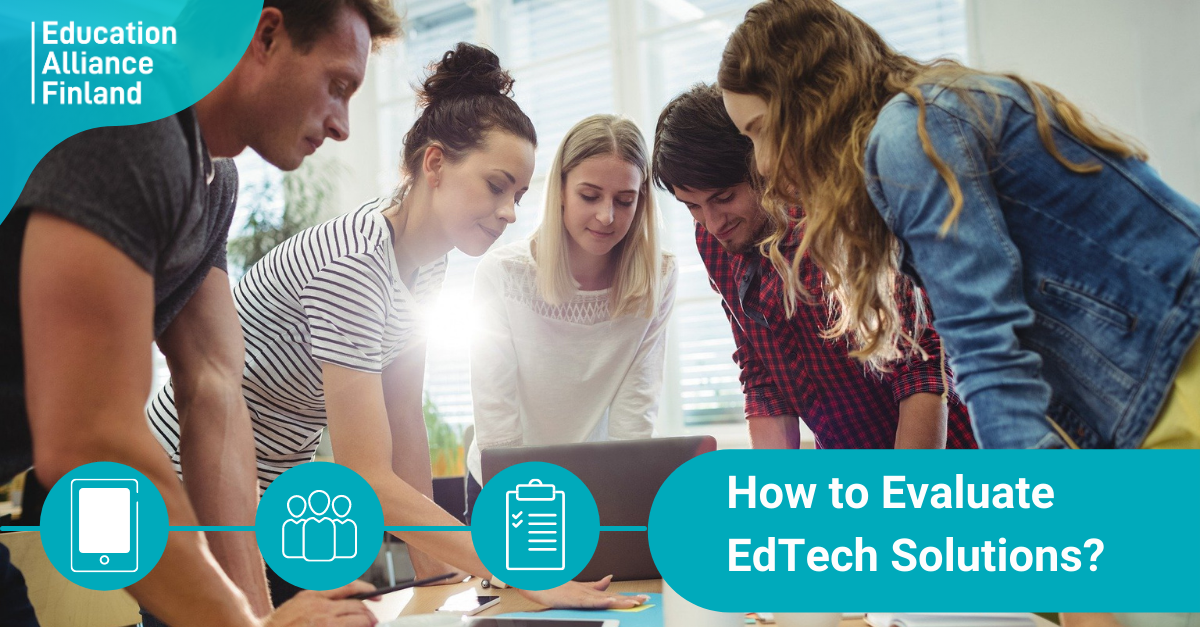

Evaluators respond to 100+ statements regarding the product's pedagogy and usability.

Academically Sound & Globally Accepted

The aspects listed above are key elements that define a solution’s pedagogy. When assessing the pedagogy, each of the four pedagogical criteria is broken down into 20-30 very specific statements. The role of each statement is to track design elements that define what pedagogical approach the solution uses.

Similar statements can be used either to guide solution development work or to evaluate a solution that you’re about to take into use.

Our pedagogical criteria were developed by educational psychologists, and the aim was to come up with a set of criteria that is academically sound, globally accepted and suitable for a variety of solutions.

We are happy to notice that the EdTech companies that have gone through our evaluation use these pedagogical aspects as a guideline when developing new content and learning activities.
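As a rough illustration of how statement-level answers could be rolled up into a position on one dimension, consider the sketch below. The statement wording, the 0-to-1 agreement scale and the simple averaging are all assumptions made for this example. The actual EAF statements and scoring are not described in this article.

```python
from dataclasses import dataclass


@dataclass
class Statement:
    text: str         # hypothetical wording, not an actual EAF statement
    pole: str         # which end of the dimension the statement supports
    agreement: float  # evaluator's agreement, assumed scale 0.0 to 1.0


def dimension_position(statements, left_pole, right_pole):
    """Return a value in [-1, 1]: -1 is fully left_pole, +1 fully right_pole."""
    left = [s.agreement for s in statements if s.pole == left_pole]
    right = [s.agreement for s in statements if s.pole == right_pole]
    left_avg = sum(left) / len(left) if left else 0.0
    right_avg = sum(right) / len(right) if right else 0.0
    return right_avg - left_avg


# One of the four dimensions named in the article: Passive OR Active learning.
statements = [
    Statement("Content is presented for the learner to read or watch", "passive", 0.5),
    Statement("The learner has to produce answers or artifacts", "active", 1.0),
    Statement("The learner can make choices that change the activity", "active", 0.5),
]

position = dimension_position(statements, "passive", "active")
print(f"Passive/Active position: {position:+.2f}")
```

A value near zero would indicate elements from both ends of the scale. In line with the model, a position is only a description of the design; whether it is appropriate depends on the solution's learning goals.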

Product Usability Affects the Educational Impact

A high-quality learning solution requires more than valid learning goals and pedagogically solid features. It also needs to offer an enjoyable user experience. The basis for this lies in the usability of the solution, because if the teacher or student struggles to use the tool, it is known to lead to lower engagement and weaker learning outcomes.

When evaluating the usability and user experience from the learning point of view, customer support and the training provided for using the solution play key roles. An EdTech evaluator should also consider these aspects when assessing the educational quality.

Usability Evaluation Criteria

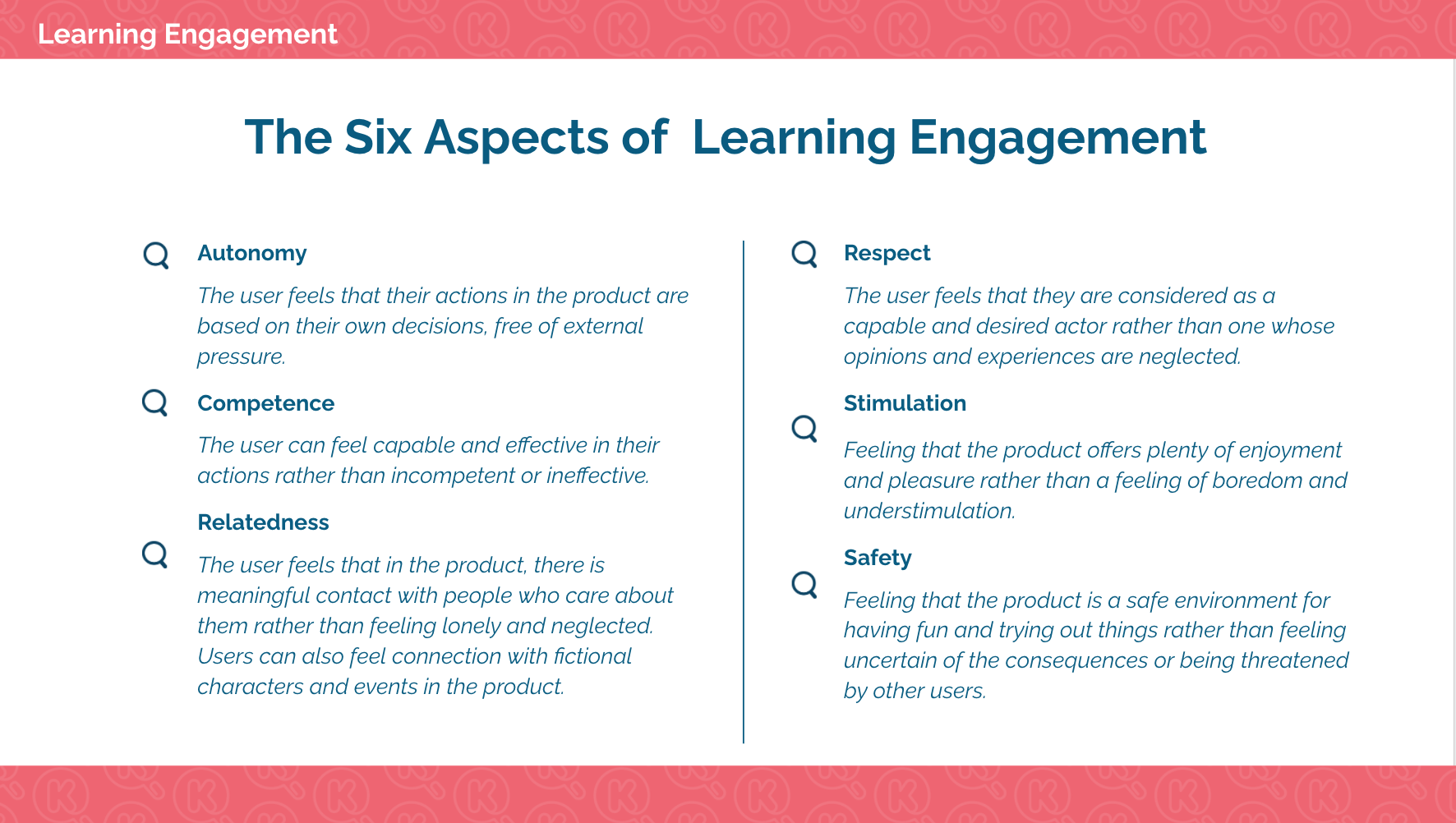

In the EAF evaluation, the usability evaluation is a part of our assessment of Learning Engagement.

In the assessment, we look at the solution’s design through six aspects that are known to affect the user’s engagement with the learning activities:

- Autonomy

- Competence

- Relatedness

- Respect

- Stimulation

- Safety

The criteria are used similarly to the pedagogical approach assessment. Each of the aspects is broken down into specific statements that track the design elements that affect, for example, the user's feeling of autonomy, competence or relatedness.

The evaluation report points out the strengths and development areas in the engagement design. Very often good pedagogy correlates with good engagement. However, major usability or technical issues that, for example, compromise the user’s privacy or make the system impossible to use will lead to rejection of the certification. These issues are listed in the report, and the certificate is granted once they have been fixed.
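The certification rule described above can be sketched as a simple gate. The issue records and field names below are hypothetical. The only behavior taken from the text is that major usability or technical issues block certification until they are fixed; the sketch does not cover the pedagogical assessment itself.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    description: str     # hypothetical example text
    major: bool          # True for issues such as a privacy risk or an unusable system
    fixed: bool = False


def blocking_issues(issues):
    """Return the major issues that are still unfixed."""
    return [issue for issue in issues if issue.major and not issue.fixed]


def can_certify(issues):
    """Certification is possible only when no major issue remains open."""
    return not blocking_issues(issues)


issues = [
    Issue("Student data visible to other users", major=True),
    Issue("Button labels are inconsistent", major=False),
]

before_fix = can_certify(issues)
issues[0].fixed = True  # the developer fixes the reported issue
after_fix = can_certify(issues)
print(before_fix, after_fix)
```

Minor issues stay in the list as development suggestions without blocking the certificate, which mirrors the distinction the report makes between development areas and issues that must be fixed.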

Each Certification Requires Rigorous Reasoning

Since EAF evaluations are done for certification purposes, it is crucial to have the evaluators rigorously justify their answers to the statements. That is EAF’s responsibility regardless of whether the solution is certified or not.

Source: ViLLE Math evaluation (Permission: Learning Analytics Center, University of Turku)

In the case of certification, the reasoning works as a justification for why the solution was granted the certificate. If the solution is not certified, the feedback needs to thoroughly explain what issues were found in the solution’s design and how they could be fixed. We trust that the value of the certification process lies in the rigorous, systematic feedback that supports the development work far into the future.

Benefits of EdTech Evaluations

As previously explained, if EAF sees that the evaluated solution provides 1) relevant learning goals, 2) pedagogically justified activities and 3) a fluent user experience, a conclusion can be made that using the solution will lead to positive learning outcomes. Naturally, that happens only if users are utilizing the solution in a meaningful way.

Since several variables affect the outcomes of a learning experience (teacher's competency, quality of devices and connections, students' preparedness, etc.), one of the most trustworthy ways of assessing the impact of an EdTech solution is through deep product analysis. Evaluations are also faster to conduct and more agile than traditional efficacy research (RCTs), so they can more easily provide valuable information to support product development work.